Hello Full Stack PMs!

Welcome to the Weekly Stack, serving up the hottest AI developments fresh off the griddle for AI builders. We've got 2,519 new stackers since the last send – welcome to the stack! 🥞

These last two weeks were crazy. February 5th alone would've been enough for three newsletters: Anthropic shipped Claude Opus 4.6 and OpenAI dropped GPT-5.3-Codex within thirty minutes of each other. Meanwhile Cowork plugins triggered a $285 billion SaaS stock selloff. Not a typo.

Two big stories this week plus some updates on what I'm building.

Here's what we're covering:

My stuff – New GSD module, published my OS workflow, and a preview of what's coming

The Model Showdown – Opus 4.6 vs GPT-5.3-Codex, and what I've learned using both

The $285B SaaSpocalypse – Cowork plugins crashed the stock market. Kind of.

Let's do this.

📣 The Best Way to Use Claude Code

The sponsor for this newsletter is a product I actually use every single day:

Nimbalyst: The best way to use Claude Code (it’s also free!)

Nimbalyst gives Claude Code a UI, solving all the major challenges you might have with it:

Installation: Installing Claude Code is weirdly hard. With Nimbalyst, you just need to grab a key from your claude.ai account.

Editing documents: Editing raw markdown is terrible. Nimbalyst gives you nicely formatted, directly editable markdown files.

Viewing CSVs: IDEs don’t support CSVs natively. In Nimbalyst, it’s a first-class experience.

And, again, it's completely free! My Claude Code for PMs and Claude Code for Everyone courses can be completed in about 5 hours and work perfectly in Nimbalyst.

If you already use Claude Code and want a nicer UI, or if you haven’t tried Claude Code because you’re intimidated by the terminal, Nimbalyst is genuinely great.

🍳 Fresh Off the Griddle

A few things from my world.

New Claude Code module: GSD (Get Shit Done) for advanced vibe coding. GSD is one of the most popular vibe coding frameworks and I built a full course module for it. It interviews you on exact requirements, breaks work into atomic plans, executes them in parallel, and runs QA to make sure everything actually works. If you've been vibe coding and hitting a wall on larger projects, this is the upgrade. LINK

Published my operating system with Aman Khan. I wrote a full breakdown of how I set up my personal OS in Claude Code – my exact workflow for structuring everything in Claude Code and Cursor. If you've ever wondered how I actually use these tools day-to-day, this is the post.

Taking a step back to build something bigger. The course modules have been coming out one at a time, and some of the earlier ones are already getting a little out of date (that's how fast things move, bye ultrathink 🥲). So I'm stepping back to plan a whole new set of modules as one cohesive thing instead of releasing them piecemeal.

I spent over $200 ingesting every top Claude Code resource I could find – articles, Reddit threads, YouTube videos, official docs, all of it – and I'm building something pretty epic. Big things are coming.

🥊 The Model Showdown: Opus 4.6 vs GPT-5.3-Codex

February 5, 2026. Anthropic and OpenAI dropped their flagship models within 30 minutes of each other. There’s never been a day like this in AI.

The Opus 4.6 headlines: a 1M token context window, 128K max output tokens, and a new "Agent Teams" feature where multiple Claude agents split tasks and work in parallel. It tops benchmarks on Terminal-Bench 2.0, Humanity's Last Exam, and BrowseComp.

I've been using it heavily. WAY fewer mistakes than Opus 4.5, and really great strategic thinking. Not just code – strategy, planning, analysis. It's the first model where I actually trust the strategic output without heavy editing. That said, it's very token-heavy. Even though the context window is bigger, it gets eaten up way more easily, so it's kind of a wash on that front imo.

With GPT-5.3-Codex, OpenAI merged coding, reasoning, and professional knowledge into one model. It’s 25% faster than its predecessor (but it’s still slow). I LOVE mid-turn steering – you can redirect the model while it's working without losing context. It feels much more like real collaboration and I expect it will be a standard feature of next-gen LLMs.

I've been using this one too. REALLY strong for coding tasks. Deep explorations of the codebase, and it checks its own work in a way that feels different from previous models. When you know exactly what you want to build, it will knock it out of the park. But it's very, very slow. You need the task well-defined before you send it off or you'll be waiting around forever for an output you didn't actually want.

The early consensus from the community: for reliable coding results, Codex is edging out Opus for a lot of people. But Claude Code uses 5.5x fewer tokens for equivalent tasks, which matters when you're watching costs.

New models → Check your mental model

Something I've been thinking about a lot lately: Using LLMs is mostly intuitive work. You develop a feel for how they work by actually using them. But the hard part is that the models keep changing and improving, and that means your intuition can become wrong without you realizing it.

For me, I've long believed that LLMs weren't really helpful for coming up with their own strategies – like, they could organize your thoughts, but the actual strategic output was mediocre. After testing Opus 4.6 with some genuine strategic work just based on inputs... I don't think that's true anymore. And I don't even know when it stopped being true. Was it Opus 4.5? Earlier? I genuinely don't know. I just know my old assumption was wrong.

The lesson: you have to keep using these tools and pushing the boundaries, or your intuition goes stale. The models are moving faster than your mental model of them.

💥 The $285B SaaSpocalypse

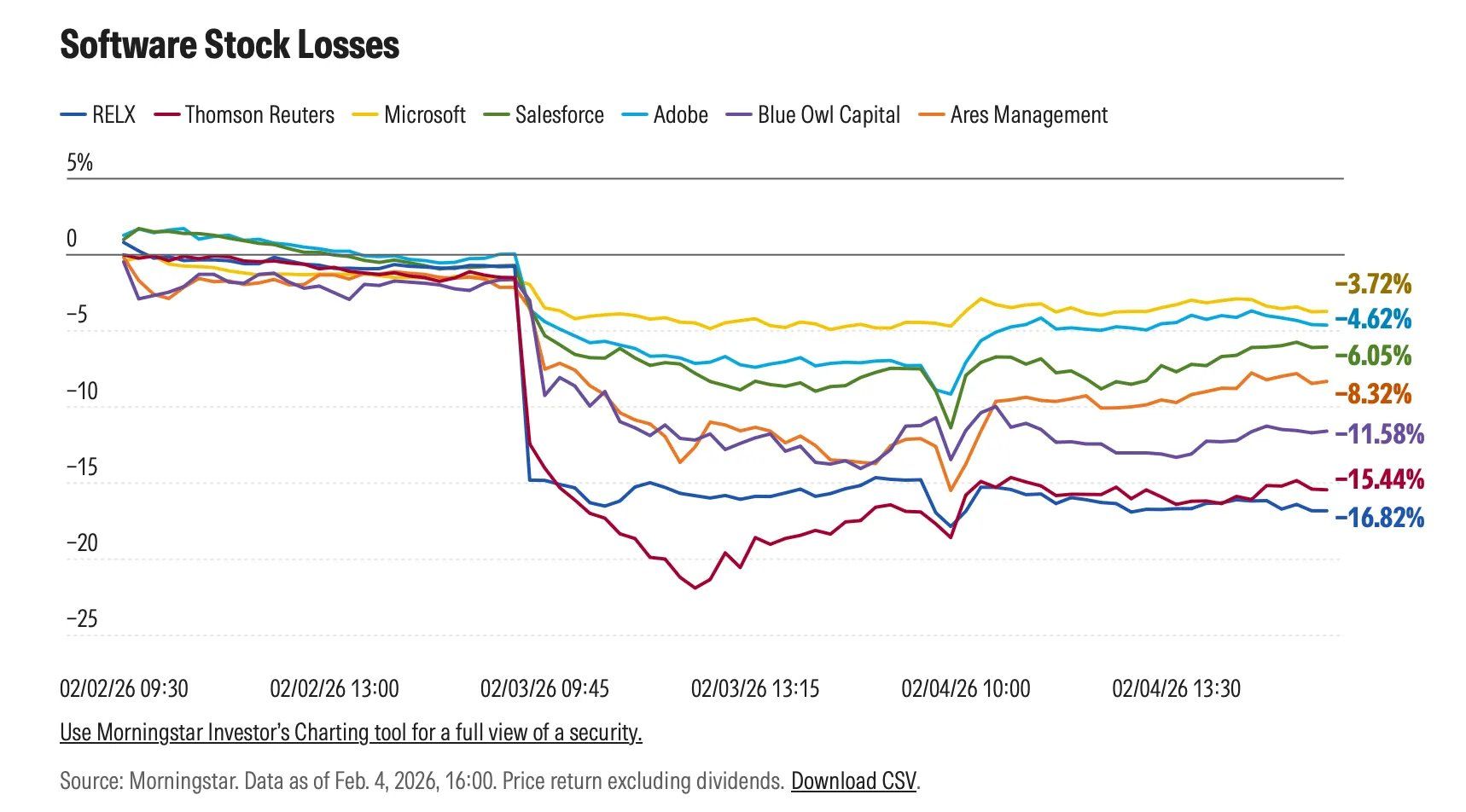

Claude Cowork launched industry-specific plugins for legal, finance, and sales. The stock market freaked out. Thomson Reuters dropped 16%. LegalZoom fell 20%. Goldman Sachs' software basket had its worst day since April. Roughly $285 billion in SaaS market cap evaporated.

I assumed it was a coincidence at first – something else must have caused it. But no, the actual analysis from multiple firms points to the Cowork plugins as the direct catalyst. That's a lot of weight for a set of research preview plugins to carry.

IMO the market overreacted short-term – these are early-stage previews, not production enterprise tools. But the direction is real. If your SaaS product's core value is "take data from here, put it there, format it like this," an AI agent on someone's desktop can already do that.

PMs at SaaS companies should be stress-testing which workflows are most vulnerable right now. My main recommendation is still to learn Claude Code – Cowork is powerful but early. If you want to go deep on it though, I have a complete guide at ccforeveryone.com/cowork.

😂 Meme of the week

This is what I mean by not-production-ready:

🔹 Advanced AI Product Management

While I’m becoming an expert at using and teaching the AI tools, I’ve realized I need a deeper understanding of how AI actually works to make the most of this crazy technology and make my own AI stuff.

I’ve been doing Product Faculty’s AI PM Certification. The combo of great content + practical Build Labs to apply the content has made this one of the best courses I’ve taken. The price increases to $3,000 this Sunday, and I’ve partnered to get a $500 discount code for readers of The Full Stack PM.

Use this link or code CARL to get it.

Using that code and enrolling by Sunday will save you $1,000.

Next cohort starts 1/26.

🥞 The Last Pancake

Your one task this week: pick one of the new models and test an assumption you have about what AI can't do. It might not be true anymore. Your intuition might be wrong. Mine was.

Ask Opus 4.6 to build a strategy for something – have it actually come up with a strategy from scratch based on inputs. See if it's still mediocre.

Give Codex a well-defined coding task and walk away – something you'd normally babysit. Let it check its own work. See what comes back.

Try Claude Code or Cowork on a file-heavy workflow – expense reports, research organization, drafting from notes. See if your SaaS tool is really doing more than Cowork can.

Keep building,

Carl 🥞